On 24 May 2016, the Monetary Authority of Singapore (MAS) ordered BSI Bank Singapore to shut down. This serious action followed the bank's compliance lapses relating to money laundering, which resulted in a criminal case.

The case is also a stark reminder of the compliance challenges that financial institutions face today. These institutions have attracted millions of dollars in regulatory fines in the aftermath of the 2008 Global Financial Crisis.

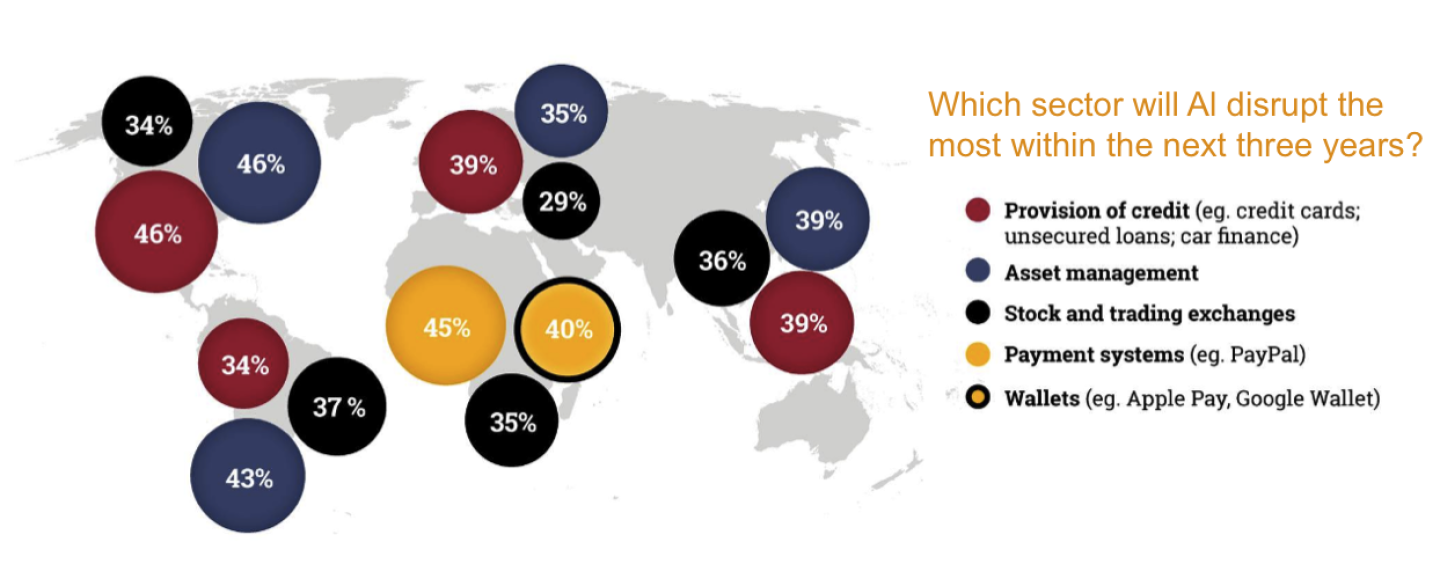

A distinguished law firm, Baker & McKenzie, commissioned a survey of 424 senior executives from financial institutions. The resulting report, titled "Ghosts in the machine: Artificial intelligence, risks and regulation in financial markets", was released in June 2016.

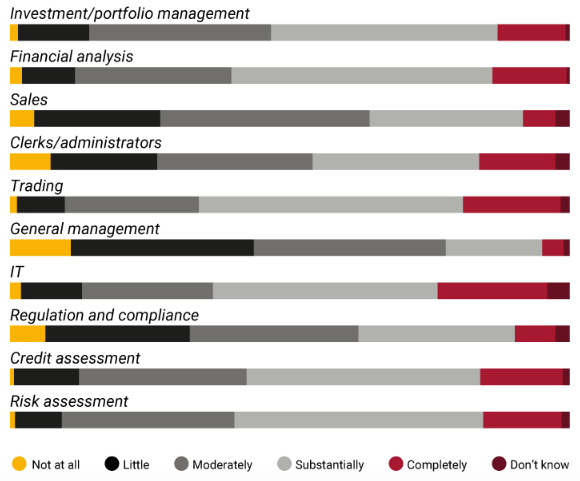

How much do you think the following financial service functions will be changed by AI and machine-learning technology over the next 3 years?

These senior executives acknowledged the competitive advantage that AI gives them, both in business capabilities and in compliance. AI can be applied to areas such as trading, credit assessment for business loans and investment management. However, the executives are also wary of the dangers of AI. Here are three significant worries that financial institutions have.

Fear of malfunctioning algorithms

Despite their advantages, these AI systems are based on algorithms that are still at an experimental stage. Hence, financial institutions are not sure whether the algorithms will work well under conditions of stress. Unlike human errors, malfunctioning algorithms may generate poor-quality data on a large scale before the problem is discovered and remedied.

For instance, a major US trading firm, Knight Capital, lost US$440 million in 2012 after its automated trading software malfunctioned. Such algorithms can be so complex that no one fully understands them. To profit from trading, financial institutions need the first-mover advantage, so they are under pressure to bring their algorithms to market quickly. This leaves them less time to test the integrity of those algorithms.

If financial institutions want to use AI to avoid regulatory fines and to make money, they also have to bear the risk that malfunctioning software might cause significant losses for their investors. Hence, financial institutions are in a difficult position.

Security concerns over regulatory demand for source code

One of the strategies proposed by US and European regulators is for financial institutions to hand over their AI source code. Financial institutions spend significant amounts of money developing this source code, and they do not trust the authorities to safeguard their intellectual property.

They noted that in 2014 a group of Chinese hackers managed to steal the personal records of 21 million US federal employees, including the personal records of the regulators themselves. If the financial regulators can’t even safeguard their own personal records, the financial institutions don’t think that the regulators can be entrusted with their valuable intellectual property. These cyber security threats also mean that financial institutions will have to engage capable web developers to safeguard their own online presence.

Legal risks of AI

Financial institutions that use AI face at least two kinds of legal risk. First, malfunctioning algorithms might cause significant losses for investors, who could then launch costly lawsuits against the fund managers. Second, as AI collects more data, there will be more disputes between financial institutions over the ownership of intellectual property.

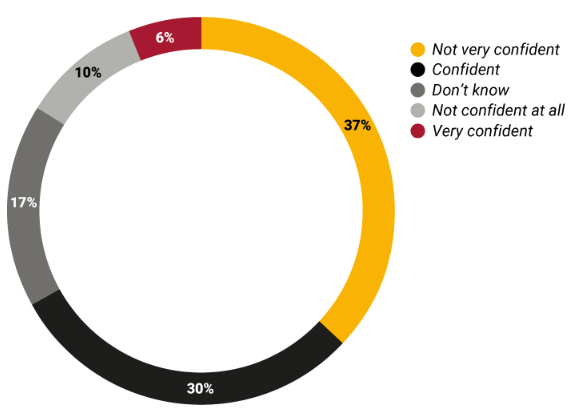

How confident are you that all other risks associated with new financial technologies have been properly understood by your organisation?

A significant 47% of senior executives are not confident that their organizations are prepared for the legal risks involved. This means there will be a significant lead time before boards of directors at financial institutions are comfortable with the large-scale implementation of AI in their organizations. They will probably first have to find the right lawyers to carry out a proper legal risk assessment.

Conclusion

While financial institutions are excited about the enhanced capabilities that AI would bring to their organizations, these fears are stopping them from fully embracing it. Human supervision is needed as they roll out AI progressively. This will also change the nature of the job market at these financial institutions: traditional roles such as traders would be reduced, while new roles such as algorithm validators would be created. Despite the advances in AI, there will not be significant changes overnight. However, ten years from now, the financial landscape could be vastly different as artificial intelligence is steadily improved and implemented.

Images from http://www.euromoneythoughtleadership.com/ghostsinthemachine/